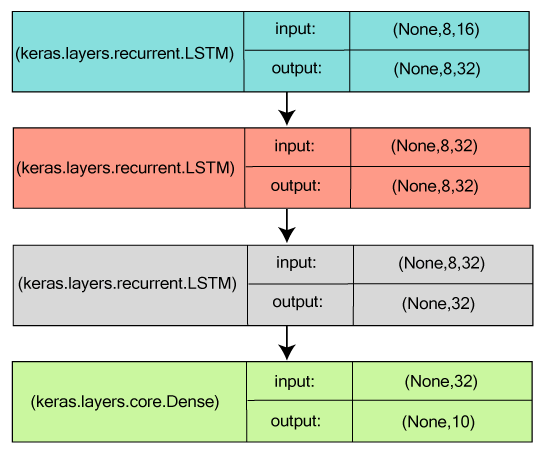

Stacking recurrent layers increases the capacity of a neural network. It is worth doing until overfitting becomes the primary obstacle, even after dropout has been applied to mitigate it. In a previous blog post we studied this case using TensorFlow with convolutional neural networks. Here we look at the cases that recurrent neural networks are best suited for.

In our neural network we will implement and use the following architectures and techniques: bidirectional GRU, stacked (multi-layer) GRU, dropout and spatial dropout, max-pooling, and average pooling. Familiarize yourself with PyTorch concepts and modules first. All of your networks are derived from a base class in PyTorch's `nn` package. Our model will be a feed-forward neural network that takes in the difference between the current and previous screen patches. See the posters presented at Ecosystem Day 2021.

Fig. 1: Recurrent neural network (a network with a self-loop). Simply put, we feed the output of the previous time step back into the network at the next time step. As an important class of spiking neural networks (SNNs), recurrent spiking neural networks (RSNNs) possess great computational power. We will use something called a continuous-time recurrent neural network, which is essentially kind of the … So now we have a network. However, do not fret: Long Short-Term Memory networks (LSTMs) have great memories and can remember information that the vanilla RNN cannot!

… 2 release includes a standard … There is a temporal dependency between such values. It is primarily used for applications such as natural language processing. PyTorch Forecasting is a PyTorch-based package for forecasting time series with state-of-the-art network architectures. This is illustrated using an implicit recurrent connection for the decay of the membrane … Feed-forward neural networks (FFNNs), such as the grandfather among neural networks, the original single-layer perceptron developed in 1958, came before recurrent neural networks.
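The techniques listed above (bidirectional GRU, stacked GRU, dropout and spatial dropout, max-pooling and average pooling) can be combined in a single PyTorch module. The following is only a minimal sketch under assumptions: the class name `StackedBiGRU`, the embedding front end, the layer sizes, and the classification head are all hypothetical and are not taken from the post. Spatial dropout is approximated here with `nn.Dropout2d`, which zeroes whole embedding channels across all time steps.

```python
import torch
import torch.nn as nn


class StackedBiGRU(nn.Module):
    """Hypothetical text classifier: embedding -> spatial dropout ->
    stacked bidirectional GRU -> max/avg pooling over time -> linear."""

    def __init__(self, vocab_size=1000, embed_dim=32, hidden_size=64,
                 num_layers=2, num_classes=2, dropout=0.2):
        super().__init__()
        self.embedding = nn.Embedding(vocab_size, embed_dim)
        # Dropout2d drops entire channels, which acts as spatial dropout
        # once the embedding dimension is moved to the channel axis.
        self.spatial_dropout = nn.Dropout2d(dropout)
        # num_layers > 1 stacks GRU layers; dropout is applied between them.
        self.gru = nn.GRU(embed_dim, hidden_size, num_layers=num_layers,
                          batch_first=True, bidirectional=True,
                          dropout=dropout)
        # Two directions, and two pooled vectors (max and average).
        self.classifier = nn.Linear(4 * hidden_size, num_classes)

    def forward(self, tokens):
        x = self.embedding(tokens)               # (batch, time, embed)
        x = x.permute(0, 2, 1).unsqueeze(3)      # (batch, embed, time, 1)
        x = self.spatial_dropout(x)
        x = x.squeeze(3).permute(0, 2, 1).contiguous()  # (batch, time, embed)
        out, _ = self.gru(x)                     # (batch, time, 2 * hidden)
        max_pool = torch.max(out, dim=1)[0]      # max over the time axis
        avg_pool = torch.mean(out, dim=1)        # average over the time axis
        return self.classifier(torch.cat([max_pool, avg_pool], dim=1))
```

Calling the model on a batch of integer token IDs of shape `(batch, time)` returns logits of shape `(batch, num_classes)`. Concatenating the max-pooled and average-pooled outputs is one common design choice; using only the final hidden state would be a reasonable alternative.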

One of the main differences from modern deep learning is that the brain encodes information in spikes rather than continuous activations. The idea of the graph neural network (GNN) was first introduced by Franco Scarselli et al. in 2009. Specifically, we will implement a message-passing neural network (MPNN) to predict a molecular property known as blood-brain barrier permeability (BBBP). State-of-the-art architectures are being released for the PyTorch framework. Motivation: as molecules are naturally represented as … In the third notebook, the bidirectional Gated Recurrent Unit model will be built. It builds on open-source deep-learning and graph processing libraries. I am not sure whether my code is right or wrong.
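To make the contrast between spikes and continuous activations more concrete, here is a rough sketch of a leaky integrate-and-fire neuron in plain Python. The post does not specify a neuron model, so the model itself, the function name `lif_neuron`, the decay factor `beta`, the threshold, and the soft reset are all assumptions chosen for illustration. The membrane decay appears as an implicit recurrent connection: each step reuses the previous membrane value scaled by `beta`.

```python
def lif_neuron(inputs, beta=0.9, threshold=1.0):
    """Simulate one leaky integrate-and-fire neuron (illustrative only).

    inputs: iterable of input currents, one per time step.
    Returns (spikes, trace): binary spikes and the membrane value per step.
    """
    mem = 0.0
    spikes, trace = [], []
    for current in inputs:
        # Leaky integration: the old membrane value decays by beta and is
        # fed back in, which is the implicit recurrent connection.
        mem = beta * mem + current
        # The output is a discrete spike, not a continuous activation.
        spike = 1 if mem >= threshold else 0
        if spike:
            mem -= threshold  # soft reset after firing (an assumption)
        spikes.append(spike)
        trace.append(mem)
    return spikes, trace


spikes, trace = lif_neuron([0.6, 0.6, 0.0, 0.0])
print(spikes)  # [0, 1, 0, 0]
```

The neuron stays silent until enough input has accumulated, fires once, and then the leftover membrane value leaks away.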

h_0 is the initial hidden state of the … See also Recurrent Neural Network Tutorial, Part 2: Implementing a RNN in Python and Theano (GitHub: dennybritz/rnn-tutorial-rnnlm). The PyTorch tutorials do a great job of illustrating a bare-bones RNN by defining the input and hidden layers, and manually feeding the hidden state back into the network so that it remembers earlier inputs. The dataset we have used for our purpose is a multivariate dataset named Tetouan City Power Consumption, available from UCI ML … Speech Recognition with PyTorch using Recurrent Neural Networks: today we are going to create a neural network with PyTorch to classify the voice.
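The idea of manually feeding the hidden state back into the network can be sketched without any framework. The example below is not the PyTorch tutorial's code; it is a plain-Python illustration of a vanilla (Elman-style) RNN step, and the function names `rnn_step` and `run_rnn`, the random weight initialisation, and the zero initial state h_0 are all assumptions made for the sketch.

```python
import math
import random


def rnn_step(x, h_prev, w_xh, w_hh, b_h):
    """One step: h_t = tanh(W_xh x_t + W_hh h_{t-1} + b_h)."""
    h_new = []
    for j in range(len(h_prev)):
        total = b_h[j]
        total += sum(w_xh[j][i] * x[i] for i in range(len(x)))
        total += sum(w_hh[j][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new


def run_rnn(sequence, hidden_size, seed=0):
    """Run the step over a sequence, feeding each hidden state back in."""
    rng = random.Random(seed)
    input_size = len(sequence[0])
    # Small random weights (illustrative, untrained).
    w_xh = [[rng.uniform(-0.5, 0.5) for _ in range(input_size)]
            for _ in range(hidden_size)]
    w_hh = [[rng.uniform(-0.5, 0.5) for _ in range(hidden_size)]
            for _ in range(hidden_size)]
    b_h = [0.0] * hidden_size
    h = [0.0] * hidden_size  # h_0: the initial hidden state
    states = []
    for x in sequence:
        # The previous hidden state is passed back in at every time step.
        h = rnn_step(x, h, w_xh, w_hh, b_h)
        states.append(h)
    return states


states = run_rnn([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]], hidden_size=3)
print(len(states), len(states[0]))  # 3 3
```

Each entry in `states` depends on every earlier input through the hidden state, which is what lets the network carry information across time steps.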
